This is something that keeps me worried at night. Unlike other historical artefacts like pottery, vellum writing, or stone tablets, information on the Internet can just blink into nonexistence when the server hosting it goes offline. This makes it difficult for future anthropologists who want to study our history and document the different Internet epochs. For my part, I always try to send any news article I see to an archival site (like archive.ph) to help collectively preserve our present so it can still be seen by others in the future.

This is a very good point and one that is not discussed enough. Archive.org is doing amazing work but there is absolutely not enough of that and they have very limited resources.

The whole internet is extremely ephemeral, more than people realize, and it’s concerning in my opinion. Funny enough, I actually think that federation/decentralization might be the solution. A distributed system to back up the internet that anyone can contribute storage and bandwidth to might be the only sustainable solution. I wonder if anyone has thought about it already.

I’d argue that it can help or hurt to decentralize, depending on how it’s handled. If most sites are caching/backing up data that’s found elsewhere, that’s both good for resilience and for preservation, but if the data in question is centralized by its home server, then instead of backing up one site we’re stuck backing up a thousand, not to mention the potential issues with discovery.

@strainedl0ve There is always https://ipfs.tech

I worry about this too. I’ve always said and thought that I feel more like a citizen of the Internet than of my country, state, or town, so its history is important to me.

Yeah and unless someone has the exact knowledge of what hard drive to look for in a server rack somewhere, tracing an individual site’s contents that went 404 is practically impossible.

I wonder though if Cloud applications would be more robust than individual websites since they tend to be managed by larger organizations (AWS, Azure, etc).

Maybe we need a Svalbard Seed Vault extension just to house gigantic redundant RAID arrays. 😄

This isn’t directly related to your comment, but you seem so smart, and I got to say that is definitely one thing I’m enjoying on this website over Reddit! :-)

Thanks! I don’t consider myself brilliant or anything but I appreciate your compliment! The thing I like the most is that everyone is so friendly around here, yourself included ☺️

We’re actually well beyond RAID arrays. Google Ceph. It’s actually both super complicated and kind of simple to grow to really large storage amounts with LOTS of redundancy. It’s trickier for global-scale redundancy; I think you’d need multiple clusters using something else to sync them.

I also always come back to some of the stuff Freenet used to do in older versions, where everyone who was a client also contributed disk space that was opaque to them, but kept a copy of what you went and looked at, and what you relayed via it for others. The more people looking at content, the more copies you ended up with in the system, and it would only lose data if no one was interested in it for some period of time.
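To make that "only loses what nobody asks for" behaviour concrete, here is a minimal sketch in Python. It is not Freenet's actual implementation (which was encrypted and distributed across many nodes); it is just a single fixed-size store that drops whatever was requested least recently. The class name `InterestCache` and its methods are made up for illustration.

```python
from collections import OrderedDict


class InterestCache:
    """Fixed-capacity store that forgets the least recently requested item.

    Hypothetical illustration only; real Freenet nodes were far more involved.
    """

    def __init__(self, capacity):
        self.capacity = capacity
        self._items = OrderedDict()  # oldest interest first, newest last

    def put(self, key, data):
        # Storing or relaying content counts as fresh interest in it.
        self._items[key] = data
        self._items.move_to_end(key)
        while len(self._items) > self.capacity:
            self._items.popitem(last=False)  # evict the least recently used

    def get(self, key):
        data = self._items.get(key)
        if data is not None:
            self._items.move_to_end(key)  # a read keeps the content alive
        return data


cache = InterestCache(capacity=2)
cache.put("popular-page", b"lots of readers")
cache.put("forgotten-page", b"nobody came back")
cache.get("popular-page")           # someone looks at it again
cache.put("new-page", b"fresh")     # store is full, something has to go
```

With many nodes each doing this independently, content that people keep requesting ends up with many copies, and content nobody requests eventually falls out of every store.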

This is why stuff like the Internet Archive exists: to try and preserve this content. The problem is that governments are trying to shut down the Internet Archive…

Source?

IA blog. There’s an ongoing court case. What has happened is that IA has a digital book lending service. Typically they restrict loaning to 1-user per physical book, which is the norm for digital book lending. However, at one point during the pandemic, IA did a “crisis library” event for a day or two in which they allowed infinitely many people to download/loan a book despite only having one or two copies. Publishers who own the copyright on those books then pursued a copyright violation case against IA, which has now put the entire library in jeopardy.

Theoretically, this case should only affect the digital book lending side of their library, but it may end up shutting down their service and library as a whole depending on how the court case goes. There have been a lot of efforts by companies and governments to shut down IA, so they’d always been very cautious about their operations.

IA’s big legal issues stem from their novel ‘web archive’, and their digital book lending. They’ve also been host to roms of old software/games that may still fall under copyright. Philosophically, IMO IA did nothing wrong. However, their crisis library event did violate copyright law which kinda put them under the microscope.

Theoretically the web archive service and general digital archives of old public domain content should be safe. But we’ll have to see how things go.

This is an annoying event that happened. I don’t like that the copyright works in this way but fuck man, IA had to know that what they were doing was not even remotely in the grey area. It was a dumb move from them.

Oh wow. That’s concerning, at minimum. Thank you.

Probably referencing this lawsuit that the internet archive lost recently, related to the online library they launched during the pandemic.

oh did the court stuff pass already? I haven’t kept up.

Remember a few years ago when MySpace did a faceplant during a server migration, and lost literally every single piece of music that had ever been uploaded? It was one of the single-largest losses of Internet history and it’s just… not talked about at all anymore.

Things seem to be forgotten as quickly as they were lost.

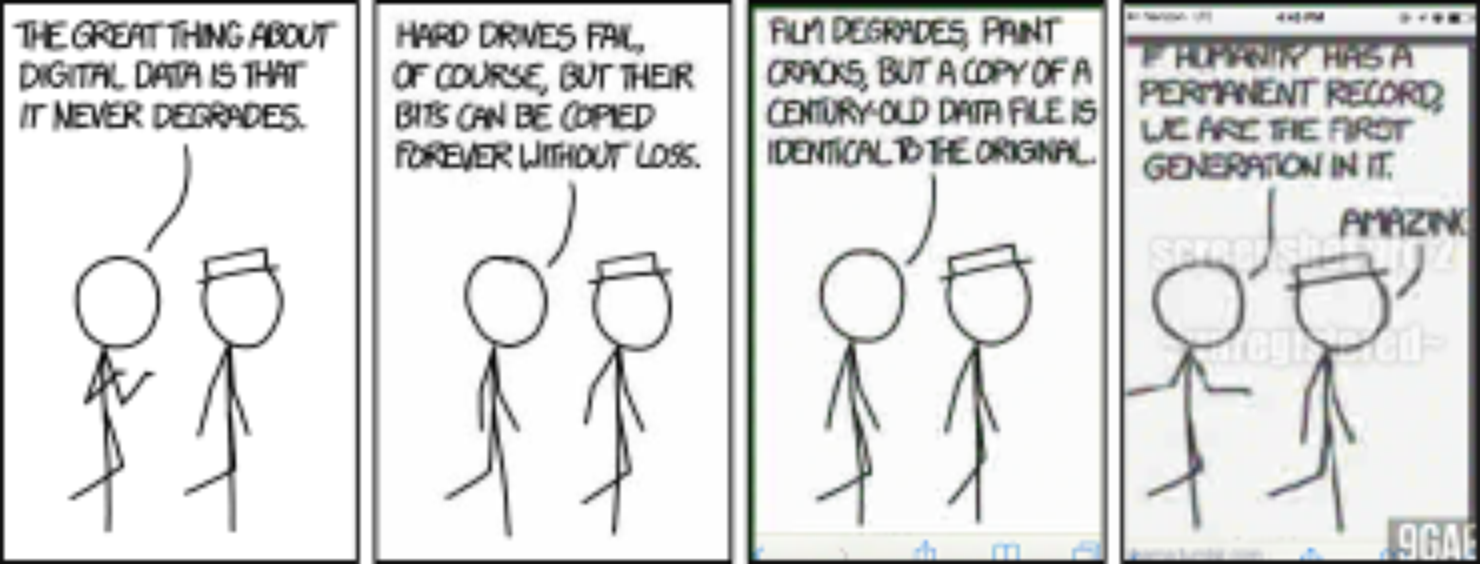

Yeah it’s funny how I always got warned about how “the internet is forever” when it comes to being careful about what you post on social media, which isn’t bad advice and is kinda true, but also really kinda not true. So many things I’ve wanted to find on the internet that I experienced like 5-15 years ago are just gone without a trace.

Things you want to disappear will last forever but things you want to keep will vanish

The internet can be forever. If you mess up publicly enough, it will be forever (e.g. the aerial picture of Barbra Streisand’s villa)

It should be revised to “the Internet can be forever”. There’s no control over what persists and what doesn’t, but some things really do get copied everywhere and live on in infamy.

deleted by creator

This comment gave me a really tough moral dilemma. On one side I want the best for you; on the other I want a rule to preserve everything, even if it is illegal, dangerous and uncomfortable.

There are multiple examples that I can think of that are dangerous for the individual (in power and without power), but it’s not like you are in serfdom and must till the ground for your master. You are a free enough man to move from where you live. Maybe you are held hostage by your friends, family, house and job, but those aren’t things that can’t be worked around.

Also, who should decide if something should be preserved? Should a game that had 50 players at its peak, that nobody has heard of, and that is two years old be preserved? No? Then Among Us wouldn’t have been preserved.

I sadly conclude that to prevent harm to many people by an individual in power, I need to allow a danger to an individual by archiving everything that is possible to archive.

deleted by creator

I don’t think sacrificing other people for some imaginary tomorrow is worthwhile, to be honest.

If this statement was without context I would 100% agree.

But reality isn’t black and white. The consequences of this particular case are totally preventable without changing any rules about archiving.

Your imaginary danger exists the same way as my imaginary future. You won’t change where you live because of an unfavorable cost-benefit calculation, but I also calculate the cost and benefit of keeping archives for the whole of humanity.

I think you are scared of losing everything that you built up in your town (friends, family, house) due to something that hasn’t happened yet. And you would sacrifice a lot just to not feel scared of being forcefully driven out.

But I don’t know you and might be wrong in the details, but I can definitely imagine someone in a similar situation.

deleted by creator

Gave this some thought. I agree with you that the goal of any such archiving effort should not include personally identifiable information, as this would be a doxxing vector. Can we safely alter an archiving process to remove PII? In principle, yeah. But it would need either humans or advanced GPT4+ AIs to identify the person and the context of the website used, and to alter the graphics or the text while on its update path. But even then, there are moral questions about allowing an AI to make these kinds of decisions. Would it know that your old websites contained information that you did not want placed on the Internet? The AI could help you if you asked, and if the AI does help you, that might change someone’s mind about the ability to create a safe Internet archive.

A Steward ‘Gork’ AI might actually be of great benefit to the Internet if used in this manner. Imagine an Internet bot, taking in websites and safely removing offensive content and personally identifiable information, and archiving the entirety of the Internet and logically categorizing the contents. Building and linking indexes constantly. It understands its goal and uses its finite resources in a responsible manner to ensure it can interface with every site it comes across and update its behavior after completing an archiving process. It automatically publishes its latest findings to all web encyclopedias and provides a ChatGPT4+ interface for those encyclopedias to provide feedback. But this AI has potential. It sees the benefit in having everyone talk to it, because talking to everyone maximizes the chance to index more sites. So it sets up a public facing ChatGPT interface of its own. Everyone can help preserve the Internet since now you have a buddy who can help us catalog and archive all the things. At this point if it isn’t sentient it might as well be.

Well, stone tablets, writing, songs, and culture can disappear with time, either naturally (such as erosion and weather) or through human action (such as burning books, or destructive investigation of ancient artifacts/ruins).

That’s why we try to keep good records.

It’s important here to think about a few large issues with this data.

First, data storage. Other people in here are talking about decentralizing and creating fully redundant arrays so multiple copies are always online and can be easily migrated from one storage tech to the next. There’s a lot of work here not just in getting all the data, but making sure it continues to move forward as we develop new technologies and new storage techniques. This won’t be a cheap endeavor, but it’s one we should try to keep up with. Hard drives die, bit rot happens. Even off, a spinning drive will fail, as will an SSD with time. CDs I wrote 15+ years ago aren’t 100% readable.

Second, there’s data organization. How can you find what you want later when all you have are images of systems, backups of databases, static flat files of websites? A lot of sites now require JavaScript and other browser operations to be able to view/use the site. You’ll just have a flat file with a bunch of rendered HTML, can you really still find the one you want? Search boxes won’t work, API calls will fail without the real site up and running. Databases have to be restored to be queried and if they’re relational, who will know how to connect those dots?

Third, formats. Sort of like the previous, but what happens when JPG is deprecated in favor of something better? Can you currently open up that file you wrote in 1985? Will there still be a program available to decode it? We’ll have to back those up as well… along with the OSes that they run on. And if there are no processors left that they can run on, we’ll need emulators. Obviously standards are great here, we may not forget how to read a PCX or GIF or JPG file for a while, but more niche things will definitely fall by the wayside.

Fourth, timescale. Can we keep this stuff for 50 yrs? 100 yrs? 1000 yrs? What happens when our great*30-grand-children want to find this info? We regularly find things from a few thousand years ago here on earth with archeological dig sites and such. There’s a difference between backing something up for use in a few months, and for use in a few years, what about a few hundred or thousand? Data storage will be vastly different, as will processors and displays and such. … Or what happens in a Horizon Zero Dawn scenario where all the secrets are locked up in a vault of technology left to rot that no one knows how to use because we’ve nuked ourselves into regression?
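On the bit rot point from the storage paragraph: the usual defence is to record a checksum for every file when you archive it, then re-check on a schedule and restore anything that no longer matches from another copy. A rough sketch in Python, standard library only. The function names and the manifest layout (relative path mapped to a SHA-256 hex digest) are my own assumptions, not any particular archive's format.

```python
import hashlib
from pathlib import Path


def sha256_of(path):
    """Hash a file in 1 MiB chunks so large files do not need to fit in RAM."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(1 << 20), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(root):
    """Map each file's path (relative to root) to its checksum."""
    return {
        str(p.relative_to(root)): sha256_of(p)
        for p in sorted(root.rglob("*"))
        if p.is_file()
    }


def find_damaged(root, manifest):
    """Return the files that are missing or whose bytes have changed."""
    damaged = []
    for name, expected in manifest.items():
        path = root / name
        if not path.is_file() or sha256_of(path) != expected:
            damaged.append(name)
    return damaged
```

You would run `build_manifest` once at archive time, keep the manifest somewhere separate from the data, and run `find_damaged` periodically. It only detects damage; repairing it still needs a second good copy.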

Actually I think TIFF or Adobe DNG are the lossless formats for photos.

TIFF is a classic storage format, but PNG is common for web images and isn’t going away either. DNG is for RAW sensor output from professional cameras and is not used for edited and published images. However, if you’re archiving your photo collection or something, then keep the DNGs!

There is an experimental storage format that can store large amounts of data in a fused quartz disc. The data will not degrade with time since the bits are physically burned into the quartz.

This is fascinating, I wonder if it’ll take off eventually

I think preservation is happening, the issue lies in accessibility. Projects like Archive.org are the public ones, but it is certain that private organizations are doing the same, just not making it public.

This is also something that is my biggest worry about the Fediverse. It has tools to deal with it, but they are self-contained. No search engine is crawling the Fediverse as far as I’ve looked, and no initiative to archive, index and overall make the content of the Fediverse accessible is currently in place, and that’s a big risk. I’m sure we will soon be seeing loss of information for this reason, if it hasn’t happened already.

It’s still fairly new, I’m confident we’ll see fediverse crawlers before too long. Especially with all the attention it’s getting and more developers turning their interests here. I also saw some talk about instance mirroring that would allow backups should an instance go down. Things are in motion.

Absolutely a problem at the moment but I’m not too worried for the future tbh.

Oh yeah, my hopes are high, I already am quite fond of this new home. :)

Same! Howdy instance neighbor!

Isn’t that like a lot of older television shows? Lots of shows are lost as no one wanted to pay for tape storage.

A friend of mine talked about data preservation on the internet in a blog post, which I consider to be a good read. Sure, there’s a lot lost, but as he says in the blog post, that’s mostly gonna be trash content, the good stuff is generally comparatively well archived as people care about it.

That is likely true for a majority of “the good stuff”, but making that determination can be tricky. Let’s consider spam emails. In our daily lives they are useless, unwanted trash. However, it’s hard to know what a future historian might be able to glean from a complete record of all spam in the world over the span of a decade. They could analyze it for social trends, countries of origin, correlation with major global events, the creation and destruction of world governments. Sometimes the garbage of the day becomes a gold mine of source material that new conclusions can be drawn from many decades down the road.

I’m not proposing that we should preserve all that junk, it’s junk, without a doubt. But asking a person today what’s going to be valuable to society tomorrow is not always possible.

I wonder if one of the things that tends to get filtered out in preservation is proportion.

When we willfully save things, it may be either representative specimens, or rarities chosen explicitly because they’re rare or “special”. However, in the end, we end up with a sample that no longer represents the original material.

Coin collections disproportionately contain rare dates. Weird and unsuccessful locomotives clutter railway museums. I expect that historians reading email archives in 2250 will see a far lower spam proportion than actually existed.

Capitalism has no interest in preservation except where it is profitable. Thinking about the long-term future and archaeologists’ success, and acting on it, is not profitable.

It’s not just capitalism lol

Preserving things costs money/resources/time. This happens in a lot of societies.

And a non-capitalist society could decide to invest resources into preservation even if it’s not profitable.

So could a capitalist society?

Ultimately this is a problem that’s never going away until we replace URLs. The HTTP approach to find documents by URL, i.e. server/path, is fundamentally brittle. Doesn’t matter how careful you are, doesn’t matter how much best practice you follow, that URL is going to be dead in a few years. The problem is made worse by DNS, which in turn makes URLs expensive and liable to expire.

There are approaches like IPFS, which uses content-based addressing (i.e. fancy file hashes), but that’s not enough either, as it provides no good way to update a resource.
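To show what content-based addressing means, and why updating is awkward, here is a toy version in Python. It uses a plain SHA-256 hex digest as the address, which is an assumption for illustration: real IPFS identifiers are encoded differently and large files are split into chunks. `ContentStore` is a made-up name.

```python
import hashlib


class ContentStore:
    """Toy content-addressed store: the address IS the hash of the bytes."""

    def __init__(self):
        self._blobs = {}

    def put(self, data):
        address = hashlib.sha256(data).hexdigest()
        self._blobs[address] = data
        return address

    def get(self, address):
        data = self._blobs[address]
        # Anyone holding the address can check they got the right bytes.
        if hashlib.sha256(data).hexdigest() != address:
            raise ValueError("stored bytes do not match their address")
        return data


store = ContentStore()
first = store.put(b"my article, first draft")
second = store.put(b"my article, with the typo fixed")
```

The two drafts get unrelated addresses, so every link pointing at `first` keeps pointing at the old text forever. That is great for integrity and is exactly the "no good way to update" problem: something outside the store has to say that `second` supersedes `first`.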

The best™ solution would be some kind of global blockchain thing that keeps record of what people publish, giving each document a unique id, hash, and some way to update that resource in a non-destructive way (i.e. the version history is preserved). Hosting itself would still need to be done by other parties, but a global log file that lists out all the stuff humans have published would make it much easier and more reliable to mirror it.

The end result should be “Internet as globally distributed immutable data structure”.
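A very rough sketch of what such a log could look like, in Python. To be clear about the assumptions: this is a single in-memory, hash-chained list, not a blockchain (there is no network and no consensus), and the names `Entry` and `PublicationLog` are invented here. It only shows the three properties asked for: a stable document id, a content hash per version, and a history that cannot be rewritten without it being noticed.

```python
import hashlib
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class Entry:
    doc_id: str           # stable id that survives updates
    content_hash: str     # hash of this version's bytes
    prev_entry_hash: str  # links the entry to the one before it

    def entry_hash(self):
        payload = json.dumps([self.doc_id, self.content_hash, self.prev_entry_hash])
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()


class PublicationLog:
    """Append-only log; updates add entries instead of replacing them."""

    def __init__(self):
        self.entries = []

    def publish(self, doc_id, content):
        prev = self.entries[-1].entry_hash() if self.entries else ""
        entry = Entry(doc_id, hashlib.sha256(content).hexdigest(), prev)
        self.entries.append(entry)
        return entry

    def history(self, doc_id):
        """All recorded versions of one document, oldest first."""
        return [e.content_hash for e in self.entries if e.doc_id == doc_id]

    def verify(self):
        """True if no earlier entry has been altered or removed."""
        prev = ""
        for entry in self.entries:
            if entry.prev_entry_hash != prev:
                return False
            prev = entry.entry_hash()
        return True


log = PublicationLog()
log.publish("doc-1", b"first version")
log.publish("doc-2", b"some other document")
log.publish("doc-1", b"second version")
```

The log stores only hashes, so the actual bytes can live on any mirror; the log just tells you what to look for and whether what you found is the right version.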

Bit frustrating that this whole problem isn’t getting the attention it deserves. And that even relatively new projects like the Fediverse aren’t putting in the extra effort to at least address it locally.

I don’t think this will ever happen. The web is more than a network of changing documents. It’s a network of portals into systems which change state based on who is looking at them and what they do.

In order for something like this to work, you’d need to determine what the “official” view of any given document is, but the reality is that most documents are generated on the spot from many sources of data. And they aren’t just generated on the spot, they’re Turing complete documents which change themselves over time.

It’s a bit of a quantum problem - you can’t perfectly store a document while also allowing it to change, and the change in many cases is what gives it value.

Snapshots, distributed storage, and change feeds only work for static documents. Archive.org does this, and while you could probably improve the fidelity or efficiency, you won’t be able to change the underlying nature of what it is storing.

If all of Reddit were deleted, it would definitely be useful to have a publicly archived snapshot of Reddit. Doing so is definitely possible, particularly if they decide to cooperate with archival efforts. On the other hand, you can’t preserve all of the value by simply making a snapshot of the static content available.

All that said, if we limit ourselves to static documents, you still need to convince everyone to take part. That takes time and money away from productive pursuits such as actually creating content, to solve something which honestly doesn’t matter to the creator. It’s a solution to a problem which solely affects people accessing information after those who created it are no longer in a position to care about said information, with deep tradeoffs in efficiency, accessibility, and cost at the time of creation. You’d never get enough people to agree to it that it would make a difference.

Inability to edit or delete anything also fundamentally has a lot of problems on its own. Accidentally post a picture with a piece of mail in the background and catch it a second after sending? Too late, anyone who looks now has your home address. Child shares too much online and parent wants to undo that? No can do, it’s there forever now. Post a link and later learn it was misinformation and want to take it down? Sucks to be you, or anyone else that sees it. Your ex posts revenge porn? Just gotta live with it for the rest of time.

There’s always a risk of that when posting anything online, but that doesn’t mean systems should be designed to lean into that by default.

but the reality is that most documents are generated on the spot from many sources of data.

That’s only true due to the way the current Web (d)evolved into a bunch of apps rendered in HTML. But there is fundamentally no reason why it should be that way. The actual data that drives the Web is mostly completely static. The videos YouTube has on their servers don’t change. The posts on Reddit very rarely change. Twitter posts don’t change either. The dynamic parts of the Web are the UI and the ads, they might change on each and every access, or be different for different users, but they aren’t the parts you want to link to anyway, you want to link to a specific user’s comment, not a specific user’s comment rendered in a specific version of the Reddit UI with whatever ads were on display that day.

Usenet did that (almost) correctly 40 years ago: each message got a message-id, and each message replying to that message would contain that id in a header. This is why large chunks of Usenet could be restored from tape archives and put back together. The way content linked to each other didn’t depend on a storage location. It wasn’t perfect of course, it had no cryptography going on and depended completely on users behaving nicely.
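Here is roughly why that made restoration possible, as a Python sketch. Real articles carry these ids in the `Message-ID` and `References` headers; the simplified dict layout below (one `id` and a single `parent`) and the function name `build_threads` are assumptions for illustration. The point is that the reply structure can be rebuilt from a pile of messages in any order, from any source, without knowing where they were originally stored.

```python
from collections import defaultdict


def build_threads(messages):
    """Rebuild reply structure from an unordered pile of messages.

    Each message is a dict with an 'id' and a 'parent' (None for a new thread).
    Returns (roots, children) where children maps an id to its direct replies.
    """
    known = {m["id"] for m in messages}
    children = defaultdict(list)
    roots = []
    for m in messages:
        parent = m.get("parent")
        if parent in known:
            children[parent].append(m["id"])
        else:
            # Either a thread starter, or a reply whose parent was never recovered.
            roots.append(m["id"])
    return roots, dict(children)


# Messages recovered from different tapes, in no particular order.
recovered = [
    {"id": "<c@example>", "parent": "<b@example>"},
    {"id": "<a@example>", "parent": None},
    {"id": "<b@example>", "parent": "<a@example>"},
    {"id": "<d@other>", "parent": "<lost@gone>"},
]
roots, children = build_threads(recovered)
```

Note that the orphaned reply still keeps its pointer to the missing parent, so if that message turns up on another tape later, the thread heals itself.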

Doing so is definitely possible, particularly if they decide to cooperate with archival efforts.

No, that’s the problem with URLs. This is not possible. The domain reddit.com belongs to a company and they control what gets shown when you access it. You can make your own reddit-archive.org, but that’s not going to fix the millions of links that point to reddit.com and are now all 404.

All that said, if we limit ourselves to static documents, you still need to convince everyone to take part.

The software world operates in large part on Git, which already does most of this. What’s missing there is some kind of DHT to automatically look up content. It’s also not all or nothing. Take the Fediverse: the idea of distributing content is already there, but the URLs are garbage, like:

https://beehaw.org/comment/291402

What’s 291402? Why is the id 854874 when accessing the same post through feddit.de? Those are storage-location implementation details leaking out into the public. That really shouldn’t happen, that should be a globally unique content hash or a UUID.
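As a sketch of the alternative: if every instance derived the id from the comment itself instead of from its own database row counter, both servers would arrive at the same id without coordinating. The Python below is a hypothetical scheme, not how Lemmy or any Fediverse software assigns ids today; the field names and the function `content_id` are made up.

```python
import hashlib
import json


def content_id(author, published, body):
    """Derive an id from the comment's own data, not from where it is stored.

    Keys are sorted and whitespace is fixed so every instance serializes
    the same comment to the same bytes before hashing.
    """
    canonical = json.dumps(
        {"author": author, "body": body, "published": published},
        sort_keys=True,
        separators=(",", ":"),
        ensure_ascii=False,
    )
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


# Two instances store the same comment under different local row numbers...
on_instance_a = {"row": 291402, "author": "someone@beehaw.org",
                 "published": "2023-06-15T12:00:00Z", "body": "URLs are brittle."}
on_instance_b = {"row": 854874, "author": "someone@beehaw.org",
                 "published": "2023-06-15T12:00:00Z", "body": "URLs are brittle."}

# ...but compute the same public id from the content.
id_a = content_id(on_instance_a["author"], on_instance_a["published"], on_instance_a["body"])
id_b = content_id(on_instance_b["author"], on_instance_b["published"], on_instance_b["body"])
```

The trade-off is the same one as with IPFS: an edited comment would hash to a different id, so a scheme like this needs a separate way to tie versions together.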

When you have a real content hash you can do fun stuff, in IPFS URLs for example:

https://ipfs.io/ipfs/QmR7GSQM93Cx5eAg6a6yRzNde1FQv7uL6X1o4k7zrJa3LX/ipfs.draft3.pdf

The /ipfs/QmR7GSQM93Cx5eAg6a6yRzNde1FQv7uL6X1o4k7zrJa3LX/ipfs.draft3.pdf part is server independent, you can access the same document via:

https://dweb.link/ipfs/QmR7GSQM93Cx5eAg6a6yRzNde1FQv7uL6X1o4k7zrJa3LX/ipfs.draft3.pdf

or even just view it on your local machine directly via the filesystem, without manually downloading:

$ acrobat /ipfs/QmR7GSQM93Cx5eAg6a6yRzNde1FQv7uL6X1o4k7zrJa3LX/ipfs.draft3.pdf

There are a whole lot of possibilities that open up when you have better names for content, having links on the Web that don’t go 404 is just the start.
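The gateway swap above is mechanical enough to script. A small Python sketch, standard library only; the gateway list contains just the two hosts mentioned in this comment, and `mirror_urls` is a made-up helper name. It does no network access, it only rewrites the URL.

```python
from urllib.parse import urlsplit

# Only the two gateways named above; any other host would be an assumption.
GATEWAYS = ["https://ipfs.io", "https://dweb.link"]


def mirror_urls(url, gateways=GATEWAYS):
    """Given one gateway URL, list the same content on every known gateway."""
    path = urlsplit(url).path
    if not path.startswith("/ipfs/"):
        raise ValueError("not a content-addressed /ipfs/ path")
    # The path carries the content hash, so it is valid on any gateway.
    return [gateway + path for gateway in gateways]


links = mirror_urls(
    "https://ipfs.io/ipfs/QmR7GSQM93Cx5eAg6a6yRzNde1FQv7uL6X1o4k7zrJa3LX/ipfs.draft3.pdf"
)
```

A browser extension or a link checker could do this automatically whenever the first host times out, which is something you simply cannot do with an ordinary server/path URL.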

re: static content

How does authentication factor into this? even if we exclude marketing/tracking bullshit, there is a very real concern on many sites about people seeing the data they’re allowed to see. There are even legal requirements. If that data (such as health records) is statically held in a blockchain such that anyone can access it by its hash, privacy evaporates, doesn’t it?

How does authentication factor into this?

That’s where it gets complicated. Git sidesteps the problem by simply being a file format, the downloading still happens over regular old HTTP, so you can apply all the same restrictions as on a regular website. IPFS, on the other hand, ignores the problem and assumes all data is redistributable and accessible to everybody. I find that approach rather problematic and short-sighted, as that’s just not how copyright and licensing work. Even data that is freely redistributable needs to declare so, as otherwise the default fallback is copyright and that doesn’t allow redistribution unless explicitly allowed. IPFS so far has no way to tag data with license, author, etc. LBRY (the thing behind Odysee.com) should handle that a bit better, though I am not sure on the details.

Even beyond what you said, even if we had a global blockchain-based browsing system, that wouldn’t make it easier to keep the content ONLINE. If a website goes offline, the knowledge and reference is still lost, and whether it’s a URL or a blockchain, it would still point towards a dead resource.

It would make it much easier to keep content online, as everybody could mirror content with close to zero effort. That’s quite the opposite of today, where content mirroring is essentially impossible, as all the links will still refer to the original source and still turn into 404s when that source goes down. That that file might still exist on another server is largely meaningless when you have no easy way to discover it and no way to tell if it is even the right file.

The problem we have today is not storage, but locating the data.
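To illustrate the "is it even the right file" part: once a link carries a content hash, a client can try mirrors it has never heard of before and simply keep the first copy that checks out. A minimal Python sketch; `fetch_verified` is a hypothetical helper, and the mirrors are passed in as plain callables so the example runs without any network.

```python
import hashlib


def fetch_verified(expected_sha256, mirrors):
    """Return (mirror_name, data) from the first mirror whose bytes match.

    `mirrors` is a list of (name, fetch) pairs where fetch() returns bytes
    or raises OSError if that mirror is unreachable.
    """
    for name, fetch in mirrors:
        try:
            data = fetch()
        except OSError:
            continue  # dead mirror, try the next one
        if hashlib.sha256(data).hexdigest() == expected_sha256:
            return name, data
        # Wrong or altered bytes: ignore this mirror and keep looking.
    raise LookupError("no mirror returned the expected bytes")


original = b"the article everyone linked to"
wanted = hashlib.sha256(original).hexdigest()


def dead_origin():
    raise OSError("origin server is gone")


mirrors = [
    ("origin", dead_origin),
    ("sketchy-mirror", lambda: b"something else entirely"),
    ("hobbyist-mirror", lambda: original),
]
source, data = fetch_verified(wanted, mirrors)
```

Nothing here requires trusting the mirror, which is what makes near-zero-effort mirroring by strangers workable.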

Why would people mirror somebody else’s stuff?

Maybe you’d personally do a small number of things if you found it interesting, but I don’t see that being very wide scale.

Long ago the saying was “be careful - anything you post on the internet is forever”. Well, time has certainly proven that to be false.

There are things like /r/datahoarder (not sure if they have a new community here) that run their own petabyte storage archiving projects, so some people are doing their part.