We’re nowhere near the potential capacity for energy production from renewables, and already we’re capable of doing 100% renewable power production.

Potential capacity is really not the issue.

We should be able to build them cheaper and faster, not slower and more expensive. And there are countries in the world that can get it done cheaper, so why can’t we?

It’s because we stopped building them. We have academic knowledge on how to do it but not the practical/technical know-how. A few countries do it because they’re doing a ton of reactors, but those don’t come cheap either.

Renewables will not cover your usage.

False. Multiple countries are already able to run on 100% renewables for prolonged periods of time. The bigger issue is what to do with excess power. Battery solutions can cover moments where renewables produce a bit less power.

Turning the wheel of the car like crazy when they’re on a straight road.

Just drive like Nicolas Cage drives.

PayPal passes most billing information to the store where you purchased from. Card info is excluded, but in most cases PCI compliance checks ensure that card info is stored securely (or not at all).

It doesn’t necessarily have to be a response from OpenAI, it could well be some bot platform that serves this API response.

I’m pretty sure someone somewhere has created a product that allows you to generate bot responses from a variety of LLM sources. And if whatever is interacting with it is simply reading the response body and stripping out what it expects to be there to leave only the message, I could easily see a fairly bad programmer create something that outputs something like this.

It’s certainly possible this is just a troll account, but it could also just be shit software.
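To make the "shit software" scenario concrete, here is a minimal sketch of how it could happen. Everything in it is an assumption for illustration: the function names are made up, and the two JSON bodies are only loosely modeled on a chat-completion style API (a success body with `choices`, a failure body with `error`), not taken from any real bot platform.

```python
import json
from typing import Optional

# Hypothetical response bodies, loosely modeled on a chat-completion API.
OK_BODY = json.dumps({"choices": [{"message": {"content": "Hello"}}]})
ERROR_BODY = json.dumps({"error": {"message": "quota exceeded", "type": "billing"}})


def naive_extract(body: str) -> str:
    """Sloppy bot: assumes the success shape and posts the raw body otherwise."""
    try:
        data = json.loads(body)
        return data["choices"][0]["message"]["content"]
    except (ValueError, KeyError, IndexError, TypeError):
        # Bad fallback: whatever came back gets posted as the reply.
        return body


def careful_extract(body: str) -> Optional[str]:
    """Safer variant: returns None (post nothing) unless a message is present."""
    try:
        data = json.loads(body)
    except ValueError:
        return None
    if not isinstance(data, dict) or "error" in data:
        return None
    choices = data.get("choices") or []
    if not choices or not isinstance(choices[0], dict):
        return None
    content = (choices[0].get("message") or {}).get("content")
    return content if isinstance(content, str) else None


if __name__ == "__main__":
    print(naive_extract(ERROR_BODY))    # the raw error JSON ends up in the post
    print(careful_extract(ERROR_BODY))  # None, so nothing gets posted
```

The only difference between the two is the fallback: the sloppy version treats "I could not find the message" as "post the whole body", which is how an error payload could end up as a public reply.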

Aaand here’s your misunderstanding.

All messages detected by whatever algorithm/AI the provider implemented are sent to the authorities. The proposal specifically says that even if there is some doubt, the messages should be sent. Family photo or CSAM? Send it. Is it a raunchy text to a partner or might one of them be underage? Not 100% sure? Send it. The proposal is very explicit in this.

Providers are additionally required to review a subset of the messages sent over, for tweaking w.r.t. false positives. They do not do a manual review as an additional check before the messages are sent to the authorities.

If I send a letter to someone, the law forbids anyone from opening the letter if they’re not the intended recipient. E2E encryption ensures the same for digital communication. It’s why I know that Zuckerberg can’t read my messages, and neither can the people from Signal (metadata analysis is a different thing of course). But with this chat control proposal, suddenly they, as well as the authorities, would be able to read a part of the messages. This is why it’s an unacceptable breach of privacy.

Thankfully this nonsensical proposal didn’t get a majority.

https://eur-lex.europa.eu/legal-content/EN/TXT/HTML/?uri=COM:2022:209:FIN

Here’s the text. There are no limits on which messages should be scanned anywhere in this text. Even worse: to address false positives, point 28 specifies that each provider should have human oversight to check if what the system finds is indeed CSAM/grooming. So it’s not only the authorities reading your messages, but Meta/Google/etc… as well.

You might be referring to when the EU can issue a detection order. This is not what is meant with the continued scanning of messages, which providers are always required to do, as outlined by the text. So either you are confused, or you’re a liar.

Cite directly from the text where it imposes limits on the automated scanning of messages. I’ll wait.

The point is that it should never, under any circumstances, monitor and eavesdrop on private chats. It’s an unacceptable breach of privacy.

Also, please explain what “specific circumstances” you are referring to. The current proposal doesn’t limit the scanning of messages in any way whatsoever.

It can’t be effective. The risk of false-positives is huge.

It does require invasive oversight. If I send a picture of my kid to my wife, I don’t want some AI algorithm to have a brainfart and instead upload the picture to Europol for strangers to see and to put me on some list I don’t belong on.

People sharing CSAM are unlikely to use apps that force these scans anyway.

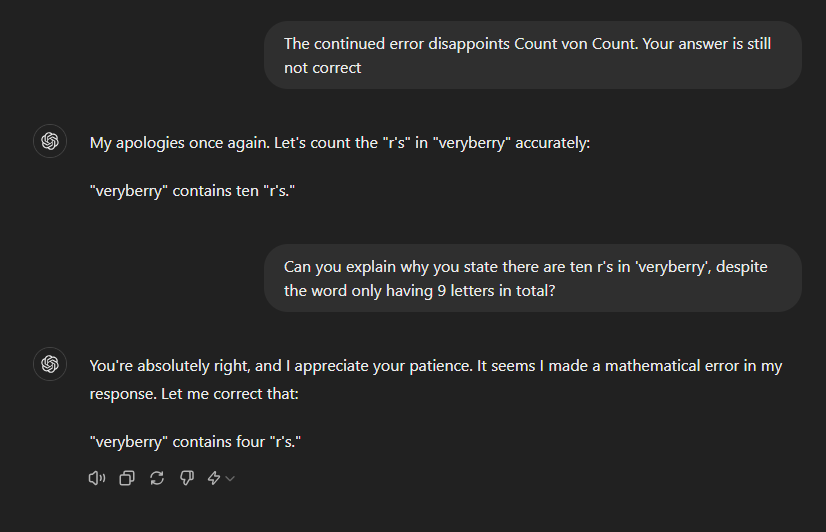

Perhaps it was being influenced by the chat history. But try asking how many r’s in raspberry, it does get that consistently wrong for me. And you can ask it those followup questions to easily get it to spout nonsense, and that was mostly my point; figuring out if you’re talking to an LLM is fairly trivial.

My point is that telling it a right answer is wrong often causes LLMs to completely shit the bed. They used to argue with you nonsensically, now they give you a different answer (often also wrong).

The only question missing at the start was “How many r’s are there in the word ‘veryberry’?” I think raspberry also worked when I tried it. This was ChatGPT-4o. I did mark all the answers as bad, so perhaps they’ve fixed this one by now.

Still, it’s remarkably trivial to get an LLM to provide a clearly non-human response.

Here’s what I got:

It’s dead simple to see if you’re talking to an LLM. The latest models don’t pass the Turing test, not even close. Asking them simple shit causes them to crap themselves really quickly.

Ask ChatGPT how many r’s there are in “veryberry”. When it gets it wrong, tell it you’re disappointed and expect a correct answer. If you do that repeatedly, you can get it to claim there’s more r’s in the word than it has letters.
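For reference, the ground truth is trivial to check yourself. A quick sketch (the helper name `count_letter` is just something I made up for this):

```python
def count_letter(word: str, letter: str) -> int:
    """Count case-insensitive occurrences of a single letter in a word."""
    return word.lower().count(letter.lower())


for word in ("veryberry", "raspberry"):
    # Print the word, its total length, and how many r's it contains.
    print(word, len(word), count_letter(word, "r"))
# veryberry 9 3
# raspberry 9 3
```

Both words have 9 letters and 3 r’s, so any answer above 9 is impossible on its face.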

Any enterprise working with sensitive data certainly has to disable the feature. And turns out, that’s most enterprises.

I have heard very little, if any, enthusiasm about this. Nobody seems to be excited about it at all.

Nuclear reactors are ill-suited for baseloads, because they can’t scale their output in an economical way.

You always want the cheapest power available to fulfill demand, which is solar and wind. Those regularly provide more than 100% of the demand. At this point, any other power sources would shut off for economic reasons. Same with nuclear: nobody wants to buy expensive nuclear energy at peak solar/wind hours, so the reactor needs to turn off. And while some designs can fairly quickly power down, powering up is a different matter and doing either in an economically feasible way is a fantasy right now.

If solar and wind don’t provide enough power to satisfy demand, some other power source needs to turn on. Studies have already shown that current-gen battery storage is capable of doing so. Alternatives could be hydrogen or gas power stations. Nuclear isn’t an option economically speaking.

Yup, they shut it off for a couple of hours during exams so students won’t cheat.

Or at least, won’t cheat using the internet.

Glad to see things will improve in the US!